Gartner expects over 40% of agentic AI projects to be canceled by the end of 2027. The reason isn’t that agents don’t work. It’s that most enterprises are deploying them in places they don’t belong, with architectures that can’t support them, against business cases nobody has pressure-tested.

Across agentic AI workflows we’ve built and salvaged at Ariel, the same pattern shows up in almost every failed program. A team gets excited about an autonomous demo, scopes a production rollout, and discovers six months in that the agent is fine but the operating environment around it isn’t. The data is fragmented. The integrations don’t grant the agent the right permissions. The governance isn’t designed for non-deterministic systems. And nobody scoped what happens when the agent makes a confident mistake.

The enterprises that are getting real value from autonomous AI agents aren’t the ones with the best models. They’re the ones that designed the operating environment first and matched the architecture to it.

Key Takeaways

- Over 40% of agentic AI projects are expected to be canceled by the end of 2027, mostly due to architecture and governance gaps, not model failure.

- Only ~10% of organizations are scaling AI agents in any given business function. The gap between pilots and production is structural.

- Multi-agent designs account for roughly 66% of enterprise deployments, and they hold up only when paired with a clear orchestration layer.

- The right pattern depends on workflow type. Deterministic processes don’t need agents. Probabilistic, multi-step ones do.

- Governance, observability, and human-in-the-loop checkpoints are architecture choices, not phase-two features.

- MCP, LangGraph, and AutoGen are the three technologies reshaping agentic system design in 2025-2026.

- ROI comes from enterprise productivity, not individual task augmentation. Scope at the process level, not the prompt level.

Why Most Agentic AI Programs Stall

The hype curve makes this look like a model problem. It almost never is.

Gartner’s June 2025 research breaks down the failure pattern clearly. Of the 3,412 respondents to its January 2025 poll, 19% had made significant agentic AI investments, 42% had made conservative ones, and 31% were still in wait-and-see mode. The kicker: of the thousands of agentic AI vendors in the market, Gartner estimates only about 130 deliver genuine agentic capabilities. The rest are practicing what Gartner calls “agent washing,” repackaging chatbots, RPA, and AI assistants as autonomous systems they aren’t.

On the buyer side, McKinsey’s 2025 State of AI report adds the second half of the picture. 88% of organizations now use AI in at least one business function, but only about 10% are scaling AI agents in any given function. Only 6% qualify as AI high performers generating measurable EBIT impact. The gap between adoption and scaling is the entire problem.

Read those numbers together and the diagnosis is structural. The technology works. The vendor market is noisy. The enterprises that move agentic AI workflows from pilot to scale are doing four things differently:

- They pick the right workflows.

- They design for observability.

- They build governance upfront.

- They treat agents as part of an operating model, not a feature.

The ones that don’t build expensive demos that never make it to production.

When Agentic Is the Wrong Tool

The hard truth is that many use cases positioned as agentic today don’t need agents at all.

The decision rule we apply with clients is straightforward. If the workflow is deterministic and repeatable, and the rules are knowable upfront, classic automation or RPA outperforms agents on cost, reliability, and audit. If the workflow is probabilistic, multi-step, and requires reasoning across ambiguous inputs, agents earn their keep. Confusing the two is how programs get expensive without delivering value.

Use deterministic automation when:

- Steps are fixed and rules don’t change frequently (invoice routing, payroll, scheduled reports)

- Audit and compliance demand exact, predictable execution

- Cost per transaction matters more than flexibility

- Human judgment isn’t required between steps

Use agentic AI when:

- The task requires reasoning across multiple data sources

- Inputs are unstructured (emails, documents, free-text tickets)

- The right next step depends on context, not a fixed rule

- Composite processes span multiple systems and require negotiation between them

Gartner makes the same point in its decision framework. In scenarios that demand strict step ordering, identity checks, regulatory disclosures, or zero deviation, deterministic approaches outperform agents. Picking the wrong tool here doesn’t just cost money. It builds organizational distrust in agents that takes years to recover from.
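The decision rule above can be sketched as a small scoring function. This is a minimal illustration, not a client-ready framework: the `WorkflowProfile` fields, the two-signal threshold, and the default-to-deterministic tie-break are assumptions chosen to mirror the two checklists.

```python
from dataclasses import dataclass


@dataclass
class WorkflowProfile:
    """Hypothetical yes/no traits taken from the two checklists above."""
    fixed_steps: bool               # steps and rules are known upfront and rarely change
    exact_execution_required: bool  # audit/compliance demands predictable, zero-deviation runs
    unstructured_inputs: bool       # emails, documents, free-text tickets
    multi_source_reasoning: bool    # task reasons across several data sources
    context_dependent_steps: bool   # next step depends on context, not a fixed rule
    spans_multiple_systems: bool    # work crosses systems that must be reconciled


def recommend_pattern(p: WorkflowProfile) -> str:
    """Return "deterministic" or "agentic" following the decision rule above."""
    # Fixed steps plus strict execution requirements: classic automation wins outright.
    if p.fixed_steps and p.exact_execution_required:
        return "deterministic"
    agentic_signals = sum([
        p.unstructured_inputs,
        p.multi_source_reasoning,
        p.context_dependent_steps,
        p.spans_multiple_systems,
    ])
    # Assumed threshold: a workflow must show at least two agentic signals.
    if not p.fixed_steps and agentic_signals >= 2:
        return "agentic"
    # Anything ambiguous defaults to the cheaper, more auditable option.
    return "deterministic"


invoice_routing = WorkflowProfile(True, True, False, False, False, False)
support_triage = WorkflowProfile(False, False, True, True, True, False)
print(recommend_pattern(invoice_routing))  # deterministic
print(recommend_pattern(support_triage))   # agentic
```

Defaulting to deterministic when the signals are weak reflects the point above: a wrongly chosen agent costs more than money.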

The Architecture Patterns That Actually Hold Up

Once you’ve confirmed the workflow needs agents, the next decision is architecture. Across enterprise AI automation projects, four patterns separate production-grade systems from prototypes.

1. Multi-agent orchestration over single-agent design

Multi-agent system designs account for 66.4% of enterprise agentic implementations, and the reason is practical. Single agents struggle with long context, mixed objectives, and cross-domain reasoning. Splitting work across specialized agents (a planner, an executor, a reviewer) produces better outcomes because each agent works within a narrower context where it performs well. Frameworks like LangGraph, AutoGen, and CrewAI are now the standard tooling for this, and each handles orchestration, agent-to-agent handoffs, and human-in-the-loop checkpoints differently.
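A minimal sketch of the orchestration idea, assuming a toy setup in which plain Python functions stand in for model-backed agents. The `Agent` and `Orchestrator` classes are hypothetical, not the API of LangGraph, AutoGen, or CrewAI; they only show a router handing work to a specialist that sees a narrow slice of shared state.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Agent:
    name: str
    context_keys: tuple[str, ...]  # the only parts of shared state this agent may see
    run: Callable[[dict], dict]    # stand-in for a model call; returns state updates


class Orchestrator:
    """Routes work between specialized agents and records every handoff."""

    def __init__(self, agents: list[Agent]):
        self.agents = {a.name: a for a in agents}
        self.handoffs: list[str] = []

    def call(self, name: str, state: dict) -> dict:
        agent = self.agents[name]
        # Narrow view: each specialist receives only the context it needs.
        view = {k: state[k] for k in agent.context_keys if k in state}
        self.handoffs.append(name)
        return {**state, **agent.run(view)}

    def handle(self, state: dict) -> dict:
        state = self.call("router", state)
        # The router's output decides which specialist receives the handoff.
        return self.call(state["route"], state)


# Toy rule-based agents standing in for LLM-backed specialists.
router = Agent("router", ("message",),
               lambda v: {"route": "billing" if "invoice" in v["message"].lower() else "support"})
billing = Agent("billing", ("message", "account_id"),
                lambda v: {"reply": f"Billing review opened for {v['account_id']}"})
support = Agent("support", ("message",),
                lambda v: {"reply": "Support ticket created"})

orchestrator = Orchestrator([router, billing, support])
result = orchestrator.handle({"message": "Invoice 4411 is wrong", "account_id": "ACME-7"})
print(result["reply"])        # Billing review opened for ACME-7
print(orchestrator.handoffs)  # ['router', 'billing']
```

The recorded handoff list is also the raw material for the observability requirements discussed below.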

2. MCP as the integration layer

Model Context Protocol, originated by Anthropic and now adopted across vendors, is the connective tissue for autonomous AI agents in production. MCP exposes business systems (CRMs, ERPs, ticketing tools, data warehouses) as standardized interfaces agents can call without bespoke integration work for each one. We’ve covered how MCP and vector stores reshape enterprise AI, and the operational point is the same in every project: without a standardized integration layer, multi-agent systems devolve into custom glue code that breaks at the second integration. With MCP, the same agent talks to Salesforce, ServiceNow, and your data lake through one protocol.
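To make the "one protocol" point concrete, here is a simplified, in-process imitation of an MCP tool server. It borrows the shape of MCP's JSON-RPC 2.0 `tools/list` and `tools/call` methods but is not the official MCP SDK: it skips initialization, transports, and capability negotiation, and the `ToyToolServer` class and `lookup_account` tool are hypothetical.

```python
import json
from typing import Callable


class ToyToolServer:
    """In-process imitation of the tool surface an MCP server exposes (not the MCP SDK)."""

    def __init__(self) -> None:
        self._tools: dict[str, tuple[dict, Callable[..., str]]] = {}

    def tool(self, name: str, description: str, input_schema: dict):
        """Decorator that registers a function as a callable tool."""
        def register(fn: Callable[..., str]) -> Callable[..., str]:
            meta = {"name": name, "description": description, "inputSchema": input_schema}
            self._tools[name] = (meta, fn)
            return fn
        return register

    def handle(self, raw_request: str) -> str:
        req = json.loads(raw_request)

        def reply(**body) -> str:
            return json.dumps({"jsonrpc": "2.0", "id": req.get("id"), **body})

        if req["method"] == "tools/list":
            return reply(result={"tools": [meta for meta, _ in self._tools.values()]})
        if req["method"] == "tools/call":
            params = req.get("params", {})
            if params.get("name") not in self._tools:
                return reply(error={"code": -32602, "message": "Unknown tool"})
            _, fn = self._tools[params["name"]]
            text = fn(**params.get("arguments", {}))
            return reply(result={"content": [{"type": "text", "text": text}]})
        return reply(error={"code": -32601, "message": "Method not found"})


crm = ToyToolServer()


@crm.tool("lookup_account", "Fetch an account summary from the CRM",
          {"type": "object", "properties": {"account_id": {"type": "string"}},
           "required": ["account_id"]})
def lookup_account(account_id: str) -> str:
    # Hypothetical stand-in for a real CRM query.
    return f"{account_id}: status=active"


listing = json.loads(crm.handle(json.dumps({"jsonrpc": "2.0", "id": 1, "method": "tools/list"})))
call = json.loads(crm.handle(json.dumps({
    "jsonrpc": "2.0", "id": 2, "method": "tools/call",
    "params": {"name": "lookup_account", "arguments": {"account_id": "ACME-7"}},
})))
print(call["result"]["content"][0]["text"])  # ACME-7: status=active
```

Because every backend answers the same two methods, an agent needs one calling convention whether the tool sits in front of a CRM, a ticketing system, or a data warehouse.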

3. Plan, execute, review loop

The pattern that consistently outperforms in production is plan-execute-review. The planner decomposes the task. The executor runs each step with tool calls. The reviewer validates the output before it commits to a system of record. This is the pattern Microsoft’s AutoGen formalizes, and it’s what separates an agent that ships work from a chatbot that suggests work. It also gives you natural checkpoints for human approval on actions that touch revenue, compliance, or customer data.
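The loop can be sketched as follows. The planner, executor, and reviewer are placeholders for model and tool calls, and the `SENSITIVE_TAGS` policy and `approve` callback are assumptions illustrating where a human checkpoint could sit before anything touches revenue, compliance, or customer data.

```python
from dataclasses import dataclass
from typing import Callable

# Assumed policy: steps carrying these tags need explicit human approval.
SENSITIVE_TAGS = frozenset({"revenue", "compliance", "customer_data"})


@dataclass(frozen=True)
class Step:
    action: str
    tags: frozenset = frozenset()


def plan(task: str) -> list[Step]:
    # Placeholder planner; a real system would ask a model to decompose the task.
    return [Step("fetch_order"), Step("compute_refund"),
            Step("issue_refund", frozenset({"revenue"}))]


def execute(step: Step) -> dict:
    # Placeholder executor; a real system would make tool calls here.
    return {"action": step.action, "ok": True}


def review(output: dict) -> bool:
    # Placeholder reviewer; validates output before it reaches a system of record.
    return bool(output.get("ok"))


def run(task: str, approve: Callable[[Step], bool]) -> list[str]:
    log = []
    for step in plan(task):
        # Human checkpoint sits before execution for sensitive actions.
        if step.tags & SENSITIVE_TAGS and not approve(step):
            log.append(f"{step.action}: held for human approval")
            break
        output = execute(step)
        if not review(output):
            log.append(f"{step.action}: rejected by reviewer")
            break
        log.append(f"{step.action}: committed")
    return log


print(run("refund order 123", approve=lambda step: False))
# ['fetch_order: committed', 'compute_refund: committed', 'issue_refund: held for human approval']
```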

4. Observability and audit from day one

Agents are non-deterministic. Two runs of the same task can produce different paths, different tool calls, different outcomes. That’s a feature, not a bug, but it makes traditional observability inadequate. You need distributed tracing for every agent action, structured logs of every tool call, version-controlled prompts and instructions, and audit trails that reconstruct decisions for compliance review. Building this in advance is 5 to 10 times cheaper than adding it after the first incident.
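One way to approach structured tool-call logging is a decorator that writes a JSON record per call, keyed by a run-level trace ID and tagged with the prompt version in use. This is a sketch under stated assumptions: `TRACE_LOG` stands in for a real log pipeline, and a production system would more likely export spans to a tracing backend such as OpenTelemetry.

```python
import functools
import json
import time
import uuid

TRACE_LOG: list[str] = []  # stand-in for a log pipeline or tracing backend


def traced_tool(prompt_version: str):
    """Wrap a tool so every call emits one structured JSON record."""
    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(trace_id: str, **kwargs):
            record = {
                "trace_id": trace_id,              # shared by every call in one agent run
                "span_id": uuid.uuid4().hex,       # unique to this tool call
                "tool": fn.__name__,
                "args": kwargs,
                "prompt_version": prompt_version,  # ties behavior to versioned instructions
            }
            start = time.perf_counter()
            try:
                result = fn(**kwargs)
                record.update(status="ok", result=result)
                return result
            except Exception as exc:
                record.update(status="error", error=repr(exc))
                raise
            finally:
                record["duration_ms"] = round((time.perf_counter() - start) * 1000, 3)
                TRACE_LOG.append(json.dumps(record, default=str))
        return wrapper
    return decorator


@traced_tool(prompt_version="triage-v3")
def classify_ticket(text: str) -> str:
    return "billing" if "invoice" in text.lower() else "general"


run_trace_id = uuid.uuid4().hex
classify_ticket(run_trace_id, text="Invoice 4411 is wrong")
print(TRACE_LOG[-1])
```

Logging failures as well as successes, inside `finally`, is what lets an audit trail reconstruct a run that went wrong.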

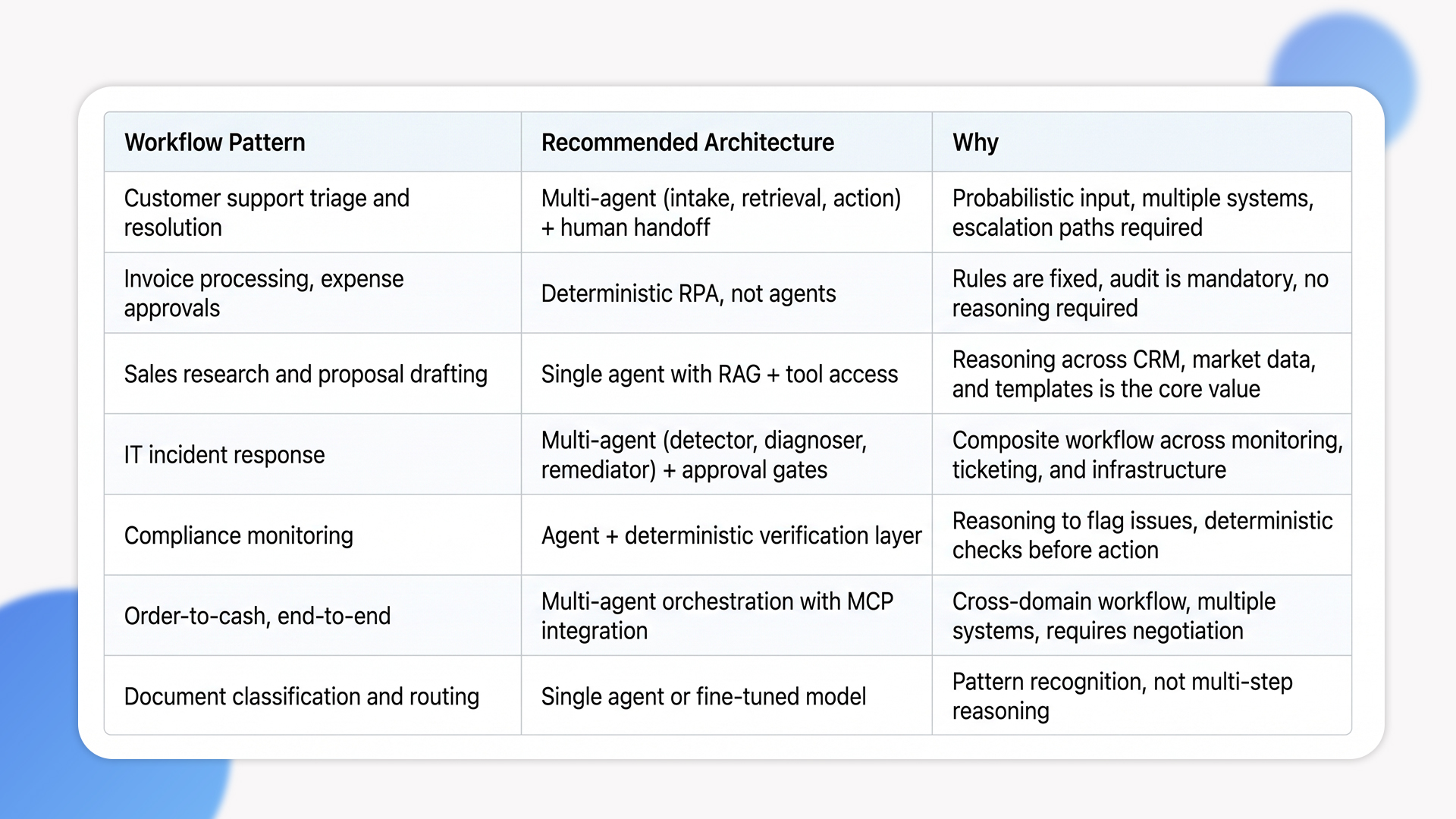

Mapping Workflows to Patterns: A Decision Lens

Different workflows justify different architectures. The table below summarizes how we map common enterprise pain points to the right enterprise AI automation pattern in client engagements.

The Trade-offs Most Programs Underestimate

These are the cost lines that don’t appear in the original business case but determine whether the program lands.

Data readiness is the longest pole

Agents are only as good as the data they can reach. Most enterprises discover during their first agentic build that the data they assumed was “available” is fragmented across systems, inconsistently labeled, missing context, or governed by access controls that don’t translate to agent-level authentication. The remediation work isn’t optional. We typically spend 30 to 40% of an agentic project budget on data preparation and integration work before any agent code ships, and that ratio is what separates programs that deliver from ones that stall.

Authentication and authorization at agent level

In a multi-user environment, an agent acting on behalf of one user cannot inherit blanket access to enterprise systems. It needs scoped, delegated permissions per action, with audit trails that link every tool call back to the originating user. This is harder than it sounds. Most enterprise SSO and IAM stacks weren’t designed for non-human actors. Getting this right requires either a runtime authorization layer (Arcade, Workato, custom MCP servers) or significant identity-system rework. Programs that skip this discover compliance problems during their first audit.
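A minimal sketch of scoped, delegated authorization, assuming each agent session carries a short-lived grant from one user. `Delegation` and `Authorizer` are hypothetical and not modeled on Arcade, Workato, or any IAM product; the point is that every check is scope-bound and time-bound, and every decision lands in an audit trail tied to the originating user.

```python
import time
from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class Delegation:
    """A short-lived grant from one user to one agent; never blanket access."""
    user_id: str
    agent_id: str
    scopes: frozenset  # e.g. {"crm:read", "tickets:write"}
    expires_at: float  # Unix timestamp


@dataclass
class Authorizer:
    audit: list = field(default_factory=list)

    def authorize(self, grant: Delegation, tool: str, scope: str,
                  now: Optional[float] = None) -> bool:
        now = time.time() if now is None else now
        allowed = scope in grant.scopes and now < grant.expires_at
        # Every decision, allowed or denied, is traceable to the originating user.
        self.audit.append({"user": grant.user_id, "agent": grant.agent_id,
                           "tool": tool, "scope": scope, "allowed": allowed, "at": now})
        return allowed


grant = Delegation("alice", "triage-agent", frozenset({"crm:read"}), expires_at=1_000.0)
authz = Authorizer()
print(authz.authorize(grant, "lookup_account", "crm:read", now=500.0))    # True
print(authz.authorize(grant, "update_account", "crm:write", now=500.0))   # False
print(authz.authorize(grant, "lookup_account", "crm:read", now=2_000.0))  # False
```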

Drift and evaluation

Models change. Prompts get updated. Tools get added. Without continuous evaluation against a golden dataset of known-good outputs, agent quality degrades silently. Production-grade agentic AI workflows require evaluation harnesses that run on every change, monitoring dashboards for accuracy and latency, and rollback paths when quality drops. This is the operating cost most teams forget to budget for.
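A continuous-evaluation gate can be sketched as a comparison against a golden dataset before each change ships. This assumes exact-match scoring and a 2-point tolerance, both simplifications; real harnesses often use graded or model-based scoring. The agents and examples are hypothetical stand-ins.

```python
from typing import Callable

Agent = Callable[[str], str]


def accuracy(agent: Agent, golden: list[tuple[str, str]]) -> float:
    """Exact-match accuracy against known-good outputs (a deliberately simple metric)."""
    hits = sum(1 for prompt, expected in golden if agent(prompt).strip() == expected)
    return hits / len(golden)


def release_gate(candidate: Agent, baseline: Agent, golden, max_drop: float = 0.02) -> dict:
    """Promote a change only if it does not regress beyond max_drop; otherwise roll back."""
    cand, base = accuracy(candidate, golden), accuracy(baseline, golden)
    decision = "promote" if cand >= base - max_drop else "rollback"
    return {"candidate": cand, "baseline": base, "decision": decision}


GOLDEN = [
    ("My invoice total is wrong", "billing"),
    ("I can't reset my password", "account"),
    ("The app crashes on login", "technical"),
    ("Please refund my last order", "billing"),
]
LABELS = dict(GOLDEN)


def baseline_agent(prompt: str) -> str:
    return LABELS[prompt]  # stand-in for the current production agent


def candidate_agent(prompt: str) -> str:
    return "billing"  # stand-in for an updated prompt that regressed


print(release_gate(candidate_agent, baseline_agent, GOLDEN))
# {'candidate': 0.5, 'baseline': 1.0, 'decision': 'rollback'}
```

Running the gate on every prompt, model, or tool change is what turns silent drift into a visible rollback decision.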

Human-in-the-loop design

Agentic systems work best when humans intervene at strategic points, not at every step. The Deloitte concept of “agent supervisors,” humans who handle exceptions requiring judgment, is operationally accurate. Place those checkpoints wrong and either the agent ships errors unchecked, or the supervisor becomes a bottleneck that erases the productivity gain.
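Strategic checkpoints can be expressed as a routing policy. This sketch assumes the agent produces a calibrated confidence score and that each action carries a business-assigned risk level; the thresholds are illustrative. Tracking the escalation rate shows the trade-off described above: set thresholds too loose and errors slip through, too tight and the supervisor queue becomes the bottleneck.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO = "auto"
    SUPERVISOR = "supervisor"


@dataclass
class Decision:
    action: str
    confidence: float  # calibrated score in [0, 1]; how it is produced is out of scope here
    risk: str          # "low", "medium", or "high", assigned by business policy


# Assumed policy: riskier actions need more confidence to skip review.
# A threshold above 1.0 means high-risk actions always go to a supervisor.
MIN_CONFIDENCE = {"low": 0.70, "medium": 0.85, "high": 1.01}


def route(decision: Decision) -> Route:
    threshold = MIN_CONFIDENCE[decision.risk]
    return Route.AUTO if decision.confidence >= threshold else Route.SUPERVISOR


def escalation_rate(decisions: list[Decision]) -> float:
    """Share of decisions sent to a human; too high and the supervisor becomes the bottleneck."""
    return sum(route(d) is Route.SUPERVISOR for d in decisions) / len(decisions)


batch = [
    Decision("send_faq_link", 0.90, "low"),
    Decision("apply_credit", 0.80, "medium"),
    Decision("close_account", 0.99, "high"),
]
print([route(d).value for d in batch])   # ['auto', 'supervisor', 'supervisor']
print(round(escalation_rate(batch), 2))  # 0.67
```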

Long tail of edge cases

The 80% case is easy. The remaining 20% is where projects break. Customer queries that mix three intents. Tickets that contain PHI mid-conversation. Data sources that go offline mid-task. Tool outputs that come back malformed. The robustness of an agentic system isn’t measured by how well it handles common cases. It’s measured by how it fails when something unexpected happens. Plan for graceful degradation, not just happy-path execution.
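Graceful degradation for tool calls can be sketched as a wrapper that retries transient failures, rejects malformed output, and escalates with a reason instead of guessing. `TransientError`, the retry count, the backoff schedule, and the escalation payload are assumptions for illustration.

```python
import json
import time
from typing import Callable


class TransientError(Exception):
    """Raised by a tool when its data source is temporarily unavailable."""


def call_with_fallback(tool: Callable[[], str], required_keys: set,
                       retries: int = 2, backoff_s: float = 0.0) -> dict:
    """Retry transient failures, reject malformed output, and degrade to a human queue."""
    problem = "no attempts made"
    for attempt in range(retries + 1):
        try:
            data = json.loads(tool())
            if isinstance(data, dict) and required_keys.issubset(data):
                return {"status": "ok", "data": data}
            problem = "malformed output: missing required fields"
        except TransientError as exc:
            problem = f"source unavailable: {exc}"
        except json.JSONDecodeError:
            problem = "malformed output: not JSON"
        if attempt < retries:
            time.sleep(backoff_s * 2 ** attempt)  # exponential backoff between attempts
    # The happy path failed: hand off with context instead of guessing.
    return {"status": "escalated", "reason": problem}


responses = iter([TransientError("warehouse offline"), '{"order_id": "A1", "total": 42}'])


def flaky_lookup() -> str:
    # Hypothetical tool: fails once, then succeeds.
    item = next(responses)
    if isinstance(item, Exception):
        raise item
    return item


ok = call_with_fallback(flaky_lookup, {"order_id", "total"})
print(ok)  # {'status': 'ok', 'data': {'order_id': 'A1', 'total': 42}}
print(call_with_fallback(lambda: "<html>502</html>", {"order_id"}))
# {'status': 'escalated', 'reason': 'malformed output: not JSON'}
```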

When Building Agentic Workflows Is the Wrong Call

Not every team should be building agentic systems right now. Here is when we tell clients to wait.

- Your data foundation isn’t ready

If your data is fragmented, inconsistently labeled, or locked in silos that require political effort to unlock, building agents on top amplifies the underlying problem rather than solving it. Fix data quality and access first. The agent program will land cleanly when the foundation supports it.

- Your governance and risk model is built for deterministic systems

If your compliance, audit, and risk teams have never approved a non-deterministic system in production, the time to engage them is before the build, not at the launch review. Programs that skip this discover their architecture is acceptable to engineering but not to legal.

- Your business case rests on individual task savings, not enterprise productivity

Saving 5 minutes per ticket across a 10-person team is not the same business case as cutting cycle time on order-to-cash by 30%. The first scopes as a tool. The second scopes as a transformation. Conflating them is how budgets get spent on autonomous AI agents without unlocking the larger value.

How Ariel Approaches Agentic AI Programs

We don’t lead with model selection or framework recommendations. We start with workflow analysis: what’s the process, who owns it, what’s the failure cost, and what’s the data and integration reality around it.

The architecture decision falls out of that analysis. Some workflows get deterministic automation. Others get a single agent with retrieval-augmented generation. The hardest ones get multi-agent orchestration with MCP integration, plan-execute-review loops, and human checkpoints at compliance-sensitive moments. Across all of them, observability and evaluation get built in from the first sprint, not retrofitted before launch.

Across industries (logistics, healthcare, financial services, e-commerce) we’ve delivered agentic AI workflows that handle support triage, document processing, multi-system reconciliation, and compliance monitoring. The platform isn’t the differentiator. The decisions made during workflow analysis and architecture design are.

Planning an agentic AI program and want a delivery-grade architecture review, not a vendor pitch?

Our team has scoped and shipped multi-agent systems across logistics, healthcare, and financial services. We’ll review your workflow, your data foundation, and your integration map, then give you an honest read on whether agentic is the right pattern.

Frequently Asked Questions

1. What’s the difference between agentic AI and traditional automation?

Traditional automation follows fixed rules and produces deterministic outputs. Agentic AI workflows handle probabilistic, multi-step tasks that require reasoning across ambiguous inputs. The right pattern depends on the workflow. Deterministic, repeatable processes are better served by RPA. Composite, judgment-heavy processes are where agents earn their keep.

2. How long does it take to build a production-grade agentic workflow?

Single-agent workflows with RAG and tool access typically take 3 to 5 months. Multi-agent orchestration with MCP integration and governance layers typically takes 6 to 12 months. The biggest variable isn’t the agent code; it’s the data preparation, integration work, and authorization layer that have to land before the agent ships.

3. What ROI is realistic from agentic AI in the first year?

BCG reports 20-30% faster workflow cycles and 25-40% reduction in low-value work time across early adopters. The realistic targets in year one are cycle-time reduction on a specific workflow, not enterprise-wide transformation. Programs that scope at the process level deliver. Ones scoped at the “AI everywhere” level usually don’t.

4. Which industries see the strongest results from agentic AI?

Financial services (compliance, fraud detection), customer operations (support triage), IT services (incident response), and supply chain (cross-system orchestration) consistently show the highest returns. The deciding factor isn’t industry; it’s whether the workflow is composite, spans multiple systems, and tolerates a human-in-the-loop checkpoint.

5. Can Ariel handle agentic system delivery end-to-end?

Yes. We cover workflow analysis, architecture design, data preparation, multi-agent orchestration, MCP integration, observability tooling, and evaluation harnesses. Get in touch to scope your program.

The Decision Behind the Decision

Agentic AI workflows aren’t a feature you add to your stack. They’re an operating model change that requires data, integration, governance, and architecture decisions to land together. The more than 40% of programs Gartner expects to be canceled by 2027 will fail because they treated it as the former. The ones that succeed will be the ones that scoped it as the latter.

Pick the workflows where autonomous AI agents earn their cost. Build the data foundation before the agent. Design observability and governance in from day one. Treat humans as supervisors at strategic checkpoints, not bystanders. Scope ROI at the process level, not the prompt level. The architecture follows from those decisions, not the other way around.

Ready to scope an agentic program built on delivery reality, not vendor templates?

Book a free consultation with Ariel’s AI team. We’ll map your workflow, assess your data and integration foundation, and design an architecture that holds up in production, not just in demos.